Veeam and Pure Storage Integration

Veeam integrates tightly with Pure Storage snapshots, and that integration is now enhanced with USAPI v2. The Veeam Universal Storage API plugin for Pure Storage supports snapshot-based backup, snapshot replication, snapshot offload to an NFS or S3 target, and backup from a replicated FlashArray. This integration simplifies data protection while saving I/O bandwidth on the primary storage that runs mission-critical applications.

Pure Storage Veeam Plugin USAPI v2

One of the most significant features of Veeam Backup & Replication is the Universal Storage API. This framework lets Pure Storage integrate its arrays with Veeam so that Veeam can take volume-level snapshots, which can later be replicated to another FlashArray through protection groups at the array level. The plugin is available for download from the Veeam site. For installation instructions, please refer to this link.

Once the plugin is installed, add the primary and secondary (replica site) Pure Storage FlashArrays to the storage infrastructure on the Veeam server. Registering the storage enables Veeam to perform volume snapshot, replication, backup, and recovery operations.

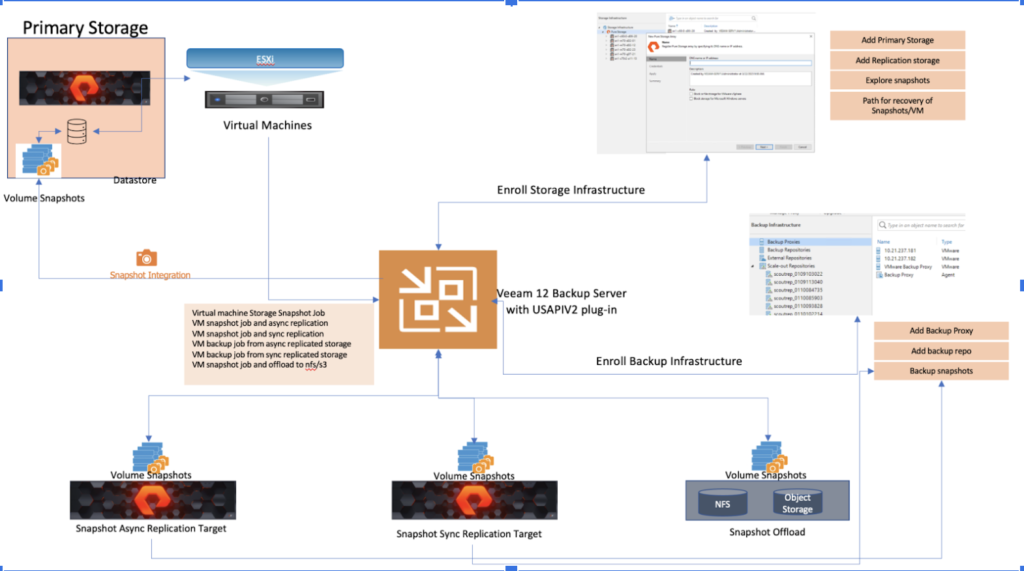

The following diagram illustrates the Veeam Backup & Replication server deployment, focusing on the Universal Storage API version 2 (USAPI v2) integration.

Use Case 1: Performing Snapshot-only jobs and replicating them to secondary storage (async replication)

Description

Snapshot-only jobs create a chain of storage snapshots on the primary storage array and can optionally replicate them to a secondary Pure Storage array for longer retention and disaster recovery. To run snapshot-only jobs with asynchronous replication to another Pure Storage FlashArray, first configure asynchronous replication and a protection group on the primary FlashArray.

Value Proposition

The following are the business implications of Veeam snapshot replication to secondary storage:

- Limits I/O and network bandwidth consumption on the primary storage, because snapshot backups can be performed from the replica target array.

- Restores to primary storage by reverse-replicating snapshots to the original source.

- A replicated snapshot can be converted into a new volume and presented as a new datastore for dev/test purposes.

- Instant recovery of virtual machines from the replica target array.

For more information on this use case, check this blog and video.

Use Case 2: Performing backup from the snapshot replicated to the secondary storage (asynchronous replication)

Description

This use case extends the Veeam snapshot replication job to the secondary array: backups run from the snapshots on the secondary storage. It requires a Veeam backup job configured on the VBR server, with the secondary storage target selected as the data source so that the backup reads from the replicated storage snapshot.

Value Proposition

The following are the business implications of Veeam replication to secondary storage:

- Limits I/O and network bandwidth consumption on the primary storage by performing backups from replicated snapshots on the secondary array.

- Restores to primary storage from backup copies.

- Long-term retention on the backup target for instant, full, and file-level recovery.

Use Case 3: Veeam backup from secondary storage with synchronous replication on FlashArray

Description

Backup from snapshots on secondary storage arrays is similar to Backup from Storage Snapshots on the primary storage array; the algorithm differs slightly for snapshot transfer and synchronous replication. Veeam Backup & Replication analyzes which VMs in the job host their disks on the storage system and checks the backup infrastructure for a backup proxy that has a direct connection to the storage system. In this use case, the secondary storage array serves as the data source for backup, which reduces the impact on production storage: during backup, VM data is read from the secondary storage array, and the primary storage array is not affected.
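The selection steps described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Veeam's actual implementation: the `VM` and `Proxy` classes, the `plan_backup` function, the array names, and the `replicas` mapping from a primary array to its secondary are all hypothetical names introduced here.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set, Tuple


@dataclass
class VM:
    name: str
    array: str  # hypothetical: name of the array hosting the VM's disks


@dataclass
class Proxy:
    name: str
    # hypothetical: arrays this proxy can reach over a direct connection
    direct_arrays: Set[str] = field(default_factory=set)


def plan_backup(
    vms: List[VM],
    proxies: List[Proxy],
    primary: str,
    replicas: Dict[str, str],
) -> Tuple[List[VM], Optional[str], Optional[Proxy]]:
    """Return (eligible VMs, source array, chosen proxy or None)."""
    # Step 1: keep only the VMs whose disks live on the registered primary array.
    eligible = [vm for vm in vms if vm.array == primary]
    # Step 2: the data source is the secondary array paired with the primary.
    source = replicas.get(primary)
    # Step 3: pick the first proxy with a direct connection to that source.
    proxy = None
    if source is not None:
        proxy = next((p for p in proxies if source in p.direct_arrays), None)
    return eligible, source, proxy


# Example with made-up names.
vms = [VM("app01", "fa-primary"), VM("db01", "fa-primary"), VM("test01", "other")]
proxies = [Proxy("proxy-a", {"fa-primary"}), Proxy("proxy-b", {"fa-secondary"})]
eligible, source, proxy = plan_backup(
    vms, proxies, "fa-primary", {"fa-primary": "fa-secondary"}
)
```

In this sketch only `proxy-b` is chosen, because it is the one with a direct path to the secondary array, so reads never touch the primary.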

Value Proposition

The following are the business implications of Veeam backup from secondary storage:

- Limits I/O and network bandwidth consumption on the primary storage by performing backups from replicated snapshots on the secondary array.

Use Case 4: Performing snapshot offload to FlashBlade NFS

Description

FlashArray snapshots are used for data protection, test/dev, cloning, and replication workflows. Snapshot offload to NFS extends this functionality by adding the ability to move snapshots off the FlashArray to an NFS storage target. Because metadata is encapsulated along with the data blocks, these snapshots are truly portable: they can be offloaded from a Pure FlashArray to any heterogeneous NFS storage target and recovered to any Pure FlashArray appliance.

Some examples of NFS storage targets that can be used with snapshot offload to NFS are:

- The Pure Storage FlashBlade™ appliance

- Third-party NFS storage appliances

- Generic Linux servers

Value Proposition

Since Snap to NFS was built from scratch for the FlashArray, it is deeply integrated with the Purity Operating Environment, resulting in highly efficient operation. A few examples of the efficiency of Snap to NFS are as follows:

- Snap to NFS is a self-backup technology built into the FlashArray. No additional software licenses or media servers are required. There is no need to install or run a Pure software agent on the NFS target either.

- Snap to NFS preserves data compression in transit and on the NFS target, saving network bandwidth and further increasing the efficiency of inexpensive NFS storage appliances, even those without built-in compression.

- Snap to NFS preserves data reduction across snapshots of a volume. After offloading the initial baseline snapshot of a volume, it only sends delta changes for subsequent snapshots of the same volume. The snapshot differencing engine runs within the Purity Operating Environment in the FlashArray and uses a local copy of the previous snapshot to compute the delta changes. Therefore, there is no back-and-forth network traffic between the FlashArray and the offload target to compute deltas between snapshots, further reducing network congestion. As a result:

  - Less space is consumed on the NFS target.

  - Network utilization is minimized.

  - Backup windows are much smaller.

- Snap to NFS knows which data blocks already exist on the FlashArray, so during restores it only pulls back the missing data blocks to rebuild the complete snapshot on the FlashArray. In addition, Snap to NFS uses dedupe-preserving restores, so data restored from the offload target to the FlashArray is deduplicated to save space on the FlashArray.
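The delta offload and missing-block restore ideas above can be illustrated with a small Python sketch. It is a simplified model, not how Purity is implemented: a snapshot is assumed to be a dictionary from block offset to a content fingerprint, and the names `compute_delta` and `blocks_to_pull` are hypothetical. Deleted blocks are ignored for brevity.

```python
from typing import Dict, Set

# Hypothetical model: block offset -> content fingerprint.
Snapshot = Dict[int, str]


def compute_delta(previous: Snapshot, current: Snapshot) -> Snapshot:
    """Blocks that are new or changed in `current` relative to `previous`.

    Both snapshots are local, so no traffic to the offload target is
    needed to work out what has to be sent.
    """
    return {off: fp for off, fp in current.items() if previous.get(off) != fp}


def blocks_to_pull(local_fingerprints: Set[str], wanted: Snapshot) -> Snapshot:
    """Blocks of `wanted` whose content is not already present locally."""
    return {off: fp for off, fp in wanted.items() if fp not in local_fingerprints}


baseline = {0: "a1", 1: "b2", 2: "c3"}
next_snap = {0: "a1", 1: "b9", 2: "c3", 3: "d4"}

# Offload: after the baseline, only blocks 1 and 3 need to be sent.
delta = compute_delta(baseline, next_snap)

# Restore: the array still holds content "a1" and "c3", so only the
# blocks with unknown content are fetched from the offload target.
to_fetch = blocks_to_pull({"a1", "c3"}, next_snap)
```

Matching on content fingerprints rather than offsets in `blocks_to_pull` is what stands in for the dedupe-preserving restore in this model: content already on the array is never transferred again.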

For more information on this use case, check this blog and video.

For more information, please visit purestorage.com.
